Overview

The MetaONNX Framework

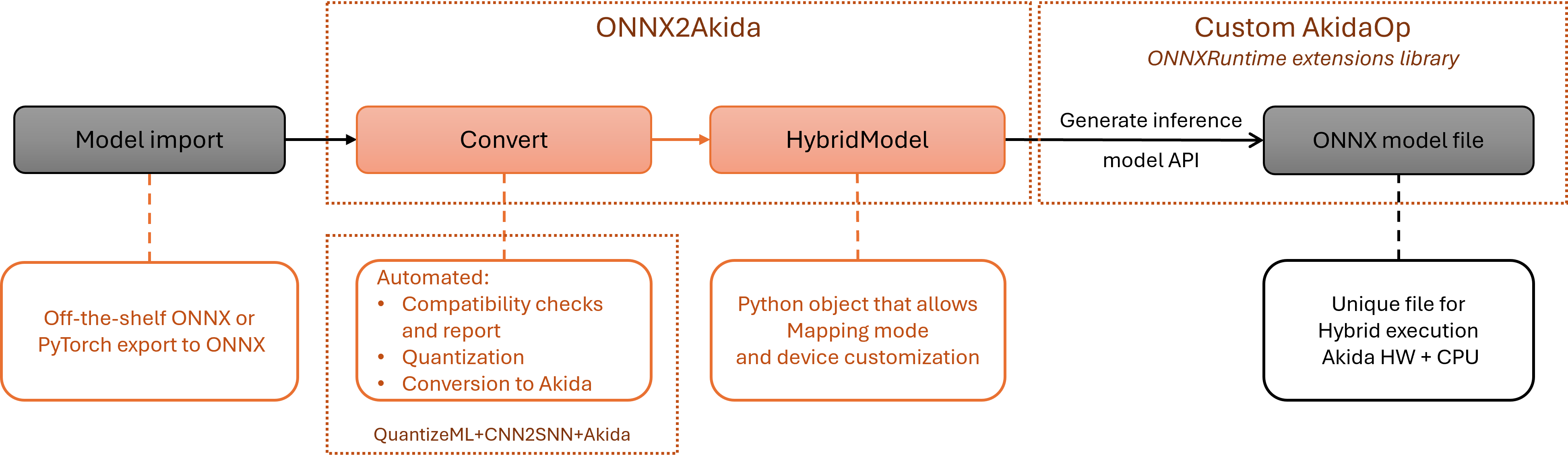

The MetaONNX Framework is a dedicated toolchain for deploying ONNX models on the Akida 2nd Generation Neuromorphic Processor. MetaONNX can run any ONNX model, whether or not all of its operators are supported on Akida hardware, by combining neuromorphic acceleration with CPU fallback execution for the unsupported operators. Built on the industry-standard ONNX format, MetaONNX provides tools for analyzing model compatibility, automatically partitioning models into Akida-accelerated and CPU-executed subgraphs, and generating heterogeneous inference models that maximize hardware utilization while ensuring complete model execution.

MetaONNX is based on the onnx2akida Python package, which is installed from PyPI with pip. The framework provides:

ONNX model ingestion - accepts any valid ONNX model as input, regardless of operator support on Akida hardware,

automatic graph partitioning - analyzes the model graph and partitions it into subgraphs, each assigned to either Akida hardware or the CPU,

AkidaOp library - a core component that identifies and assigns supported neural network operators to the Akida 2nd Generation hardware,

CPU fallback execution - ensures operators not supported by Akida can be executed on CPU,

device estimation tools - determine the minimum Akida hardware configuration required for a given model,

developer-friendly APIs - Python API and CLI tools (onnx2akida, onnx2akida-device) integrated with ONNX Runtime for seamless deployment workflows.
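To make the partitioning and CPU fallback ideas concrete, here is a minimal conceptual sketch in plain Python. It does not use the onnx2akida API and is not the algorithm the package implements. It assumes a model reduced to a linear, topologically ordered list of operator types, and the set ASSUMED_AKIDA_OPS is a hypothetical stand-in for the operators that the AkidaOp library actually recognizes.

```python
from itertools import groupby

# Hypothetical set of operator types treated as Akida-compatible for this
# illustration only; the real supported set is defined by the AkidaOp library.
ASSUMED_AKIDA_OPS = {"Conv", "Relu", "MaxPool", "Gemm"}


def partition(op_types, supported=ASSUMED_AKIDA_OPS):
    """Split an ordered list of operator types into contiguous segments.

    Returns a list of (target, ops) tuples, where target is "akida" for
    operators in `supported` and "cpu" for everything else.
    """
    def target(op):
        return "akida" if op in supported else "cpu"

    # groupby merges consecutive operators that share the same target.
    return [(tgt, list(group)) for tgt, group in groupby(op_types, key=target)]


def compatibility(op_types, supported=ASSUMED_AKIDA_OPS):
    """Fraction of nodes that would be assigned to Akida (0.0 if empty)."""
    if not op_types:
        return 0.0
    return sum(op in supported for op in op_types) / len(op_types)


# A toy operator sequence; Softmax and TopK fall back to the CPU here
# only because they are absent from the assumed set above.
model_ops = ["Conv", "Relu", "MaxPool", "Softmax", "Conv", "Relu", "TopK"]
segments = partition(model_ops)

for tgt, ops in segments:
    print(f"{tgt:>5}: {ops}")
print(f"Akida-compatible nodes: {compatibility(model_ops):.0%}")
```

Real ONNX graphs are directed acyclic graphs with branches rather than simple chains, so an actual partitioner also has to track tensor dependencies between subgraphs. The sketch only shows the core idea: supported operators are grouped into accelerated segments, and every other operator still runs, on the CPU.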

The onnx2akida toolkit works with models from any framework that supports ONNX export, including TensorFlow (via tf2onnx), PyTorch (via torch.onnx), and Hugging Face models (via Optimum). This broad compatibility enables developers to bring their existing models to the Akida platform without being constrained by hardware-specific operator limitations.
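As an illustration of the PyTorch route, the sketch below exports a small model with torch.onnx.export to produce an ONNX file that could then be passed to the onnx2akida tools. The model architecture, the function name export_tiny_model, the file name, and the tensor names are all hypothetical choices made for this example. Depending on the installed PyTorch version, the exporter may also require the onnx or onnxscript packages.

```python
def export_tiny_model(path="tiny_model.onnx"):
    """Export a small PyTorch CNN to ONNX and return the output path.

    Hypothetical helper for illustration; not part of onnx2akida.
    """
    # Imported lazily so that this module loads even without PyTorch.
    import torch
    from torch import nn

    # A deliberately small image classifier (arbitrary architecture).
    model = nn.Sequential(
        nn.Conv2d(3, 8, kernel_size=3, padding=1),
        nn.ReLU(),
        nn.AdaptiveAvgPool2d(1),
        nn.Flatten(),
        nn.Linear(8, 10),
    ).eval()

    # The exporter needs an example input to trace the graph.
    dummy_input = torch.randn(1, 3, 32, 32)

    torch.onnx.export(
        model,
        (dummy_input,),
        path,
        input_names=["input"],
        output_names=["logits"],
    )
    return path
```

Calling export_tiny_model() writes tiny_model.onnx to the current directory. The resulting file is an ordinary ONNX model, so it makes no difference to the downstream tooling which framework produced it.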

MetaONNX execution flow

The MetaONNX examples

The examples section includes tutorials demonstrating the onnx2akida workflow on various model architectures. These examples illustrate:

Analyzing ONNX model compatibility with Akida hardware

Converting models from popular frameworks (TensorFlow, PyTorch, Hugging Face)

Generating and using hybrid inference models

Estimating hardware requirements for deployment

Warning

While the MetaONNX examples are provided under the Apache License 2.0, the underlying Akida library is proprietary. Please refer to the End User License Agreement for terms and conditions.